In edge computing, network latency is key. Edge-native applications often benefit from the lowest latency and the least latency variation possible. For many applications, network round trip latencies less than 50ms are necessary to provide acceptable user experience. Some applications requiring near real-time control or extremely fast response need even less than 10ms. While we’ve been able to reach these levels in controlled wired and Wi-Fi environments, the performance of commercial 4G LTE networks has been one of the biggest challenges for the Living Edge Lab here at Carnegie Mellon University in Pittsburgh.

In 2017, to support our edge computing research, we built our first private LTE network. Working with Crown Castle and DT, we created a small 4G LTE network using an experimental Band 42 3.5GHz license. The network had three outdoor sites and provided limited coverage near the Carnegie Mellon University campus. While we were able to get better latencies than from commercial carriers, the small number and narrow range of commercially available devices for that frequency band, the limited coverage, and the limited support for the equipment made it difficult to use for much of our edge-native application research. Despite these challenges, the initial testbed taught us a great deal about wireless network architectures and requirements for edge computing.

Fast forward to late 2020. By this time, unlicensed GAA CBRS spectrum was available, and commercial 4G LTE network and user equipment for this frequency band was becoming easier to obtain. We began planning with our partners to upgrade the existing network to a modern, supportable network in the CBRS band, covering a broader geographic area with lower latency and greater throughput. Crown Castle, JMA Wireless and Amazon Web Services stepped up to redesign, sponsor and support the building of the network. In mid-June 2021, we collectively brought the full network into service and began using it for our research.

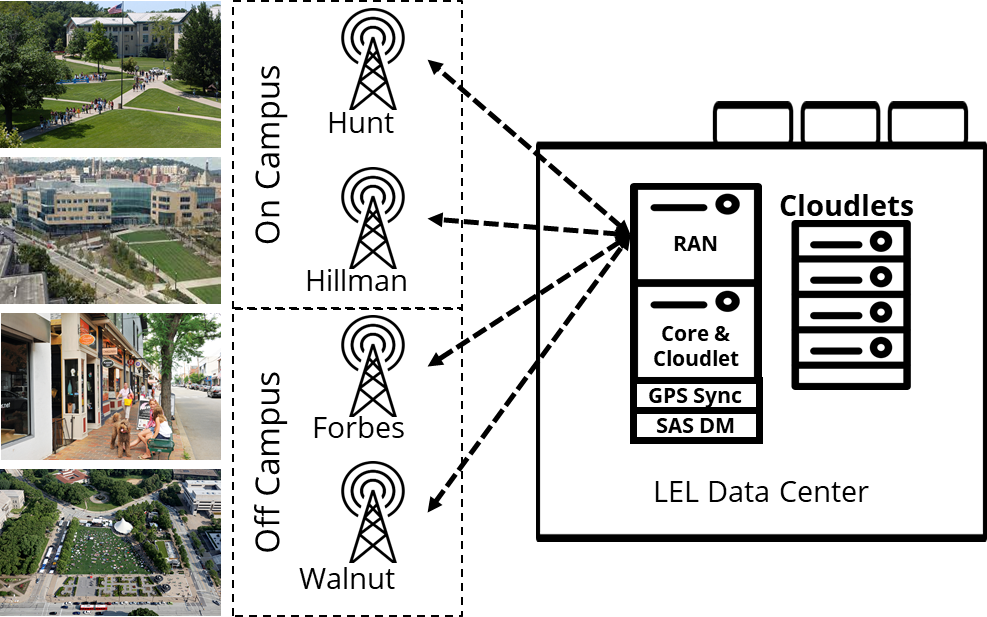

The network (shown below) consists of four outdoor cell sites using JMA CBRS Cell Hubs and directional antennas. They provide coverage for much of the outdoor space on the main Carnegie Mellon campus, in a public park known as Schenley Plaza near campus, and in an urban shopping district on Walnut Street. JMA deployed their 4G/5G virtualized radio access network (VRAN) on an off-the-shelf Dell/Intel server and worked with AWS to deploy the wireless evolved packet core (EPC) on AWS Snowball. The VRAN and EPC are in our Living Edge Lab data center and connected to the radios over the Crown Castle fiber network. The entire network was constructed and commissioned in less than three months.

Figure 1 – Network Overview

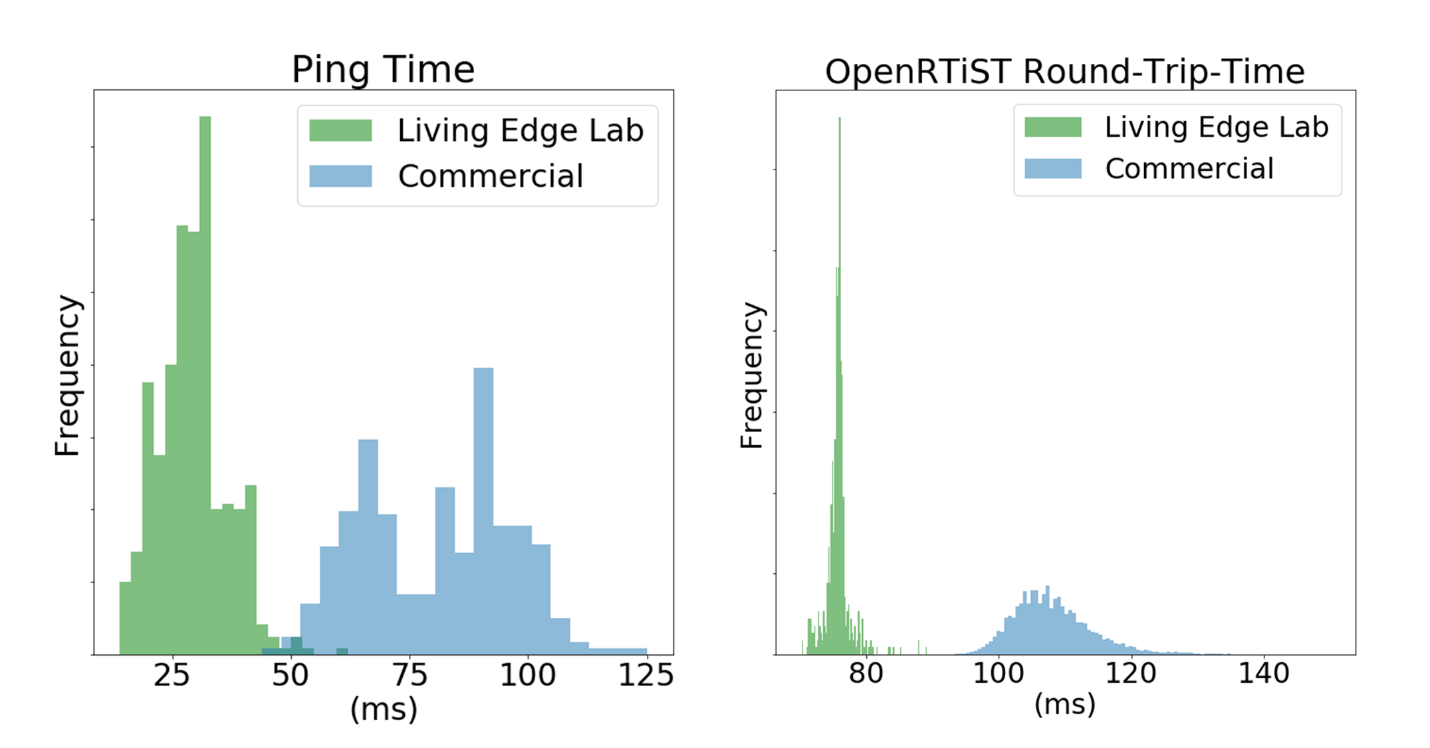

We’re getting great coverage from the network in the areas we’d hoped for; mean latencies are in the 30-35ms range with a 5-8ms standard deviation. Throughput is running 60-80 Mbps download and 15-25 Mbps upload. We’ve also been able to benchmark using our OpenRTiST application and are consistently getting 75ms round-trip times and 26 frames per second. That is not quite as good as Wi-Fi but better than commercial 4G LTE networks, especially when those commercial networks use remote interexchange points (see Figure 2).

Figure 2 – Latency Measurements
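For readers who want to produce comparable numbers on their own network, here is a minimal Python sketch of how a set of round-trip samples can be reduced to a mean, a standard deviation and the share of samples under a latency budget. It is not the tooling we used for the measurements above. The names `RttSummary`, `summarize_rtt` and `tcp_connect_rtt_ms` are hypothetical, the 50ms default simply mirrors the user-experience target mentioned at the top of this post, and timing a TCP connect is only a rough stand-in for a true round-trip measurement.

```python
import socket
import statistics
import time
from dataclasses import dataclass


@dataclass
class RttSummary:
    mean_ms: float              # average round-trip time
    stdev_ms: float             # sample standard deviation (latency variation)
    share_within_budget: float  # fraction of samples below the budget


def summarize_rtt(samples_ms, budget_ms=50.0):
    """Summarize round-trip samples (milliseconds) against a latency budget.

    The 50 ms default is an assumption taken from the target quoted in
    the post, not a property of any particular network.
    """
    if len(samples_ms) < 2:
        raise ValueError("need at least two samples to compute a deviation")
    within = sum(1 for s in samples_ms if s < budget_ms)
    return RttSummary(
        mean_ms=statistics.mean(samples_ms),
        stdev_ms=statistics.stdev(samples_ms),
        share_within_budget=within / len(samples_ms),
    )


def tcp_connect_rtt_ms(host, port, timeout_s=2.0):
    """Rough RTT proxy: time taken to open (and close) a TCP connection.

    Hypothetical helper; it includes connection setup overhead, so it
    will read slightly higher than an ICMP ping to the same host.
    """
    start = time.perf_counter()
    with socket.create_connection((host, port), timeout=timeout_s):
        pass
    return (time.perf_counter() - start) * 1000.0
```

In practice you would call `tcp_connect_rtt_ms` a few hundred times against a server at the edge site and pass the resulting list to `summarize_rtt`. Reporting the deviation alongside the mean matters because edge-native applications are sensitive to latency variation as well as to average latency.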

Going forward, we are planning to tune the network to reduce the latency even further. One key effort will be upgrading the VRAN and EPC to 5G, but we’re also looking at other options. We also have plans for the network beyond latency reduction. Since we’re big believers in open source, we’re working with Facebook Connectivity to add Magma to the network. We’re considering adding more sites, including some indoors. And, of course, we’re expanding our research, student projects and industry collaborations on the network.

I’ll conclude with a special thank you to our industry partners Crown Castle, JMA Wireless and Amazon Web Services, who supported the creation of this network. They are true partners in every sense of the word. Also, a shoutout to Druid Software and Federated Wireless, who provided the wireless core and SAS service, respectively. It couldn’t have happened without them all. We learned a lot from this experience and we’re happy to share what we learned. If you have questions, feel free to reach out at info@openedgecomputing.org.

Jim Blakley